INSIGHT: Hybrid Intelligence for Space Robotics: Merging Classical and Learned Methods for Robust and Interpretable GNC

PI(s): Carlos Pérez del Pulgar (UMA) and Jorge Pomares Baeza (UA)

Grant no. PID2024-160373OB

The field of space robotics is at a critical crossroads, balancing the growing demand for advanced capabilities with the current state, adoption, and maturation of emerging technologies. The advent of the New Space era has amplified the need for highly autonomous robotic systems across a diverse and expanding spectrum of applications. These applications range from autonomous inspection and repair of damaged satellites to extracting and transporting samples in unstructured planetary environments. A core unifying feature of these applications is their reliance on novel capabilities in autonomous guidance, navigation, and control (GNC) of robotic systems.

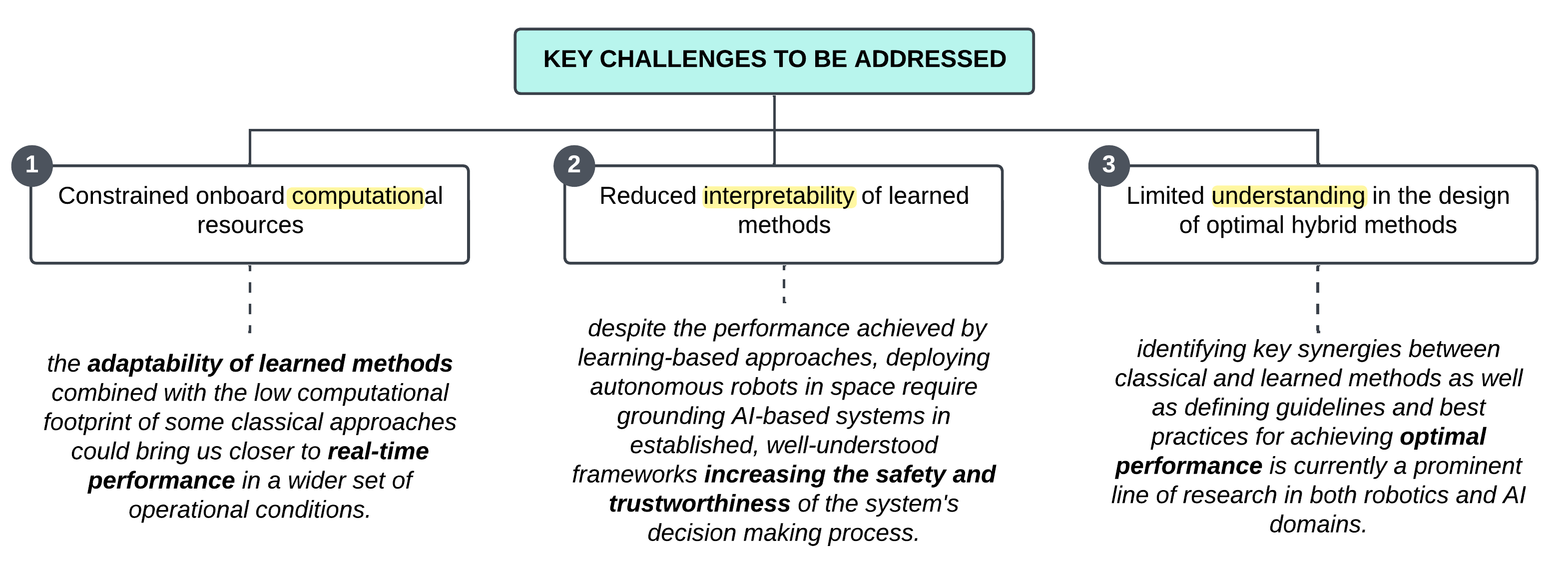

The challenges posed by operating in space and the constrained computational capacity of space-grade processors limit what can be achieved through conventional methods. Learned approaches based on neural networks offer significant potential to advance robotic autonomy. Yet, as we will explore, their safe and effective implementation in the strategic and risk-averse space sector introduces major challenges.

With this coordinated project, we seek to bridge classical and contemporary approaches across two foundational building blocks of a GNC stack: perception & navigation and motion planning & control, by researching and developing solutions that are applicable across both orbital and planetary domains. Such an endeavor demands a truly collaborative effort through complementary expertise (from classical optimization to learning-based methods), state-of-the-art infrastructure (from facilities that mimic microgravity to analog fields resembling planetary landscapes), and shared resources (from orbital simulators to planetary testbeds). The collaboration set forth in this proposal between the University of Malaga's Space Robotics Lab (UMA-SRL) and the University of Alicante's Human Robotics Research Group (UA-HURO) brings together all the necessary pieces, enabling us to tackle this challenge in its entirety in a way that would be impossible for a single group or university working independently.

Project Goals

Through INSIGHT, we will address three fundamental problems related to the adoption of AI-enabled technologies in space robotics (outlined in Figure 1):

1) constrained onboard computational resources.

2) reduced interpretability of learned methods.

3) limited understanding in the design of optimal hybrid methods.

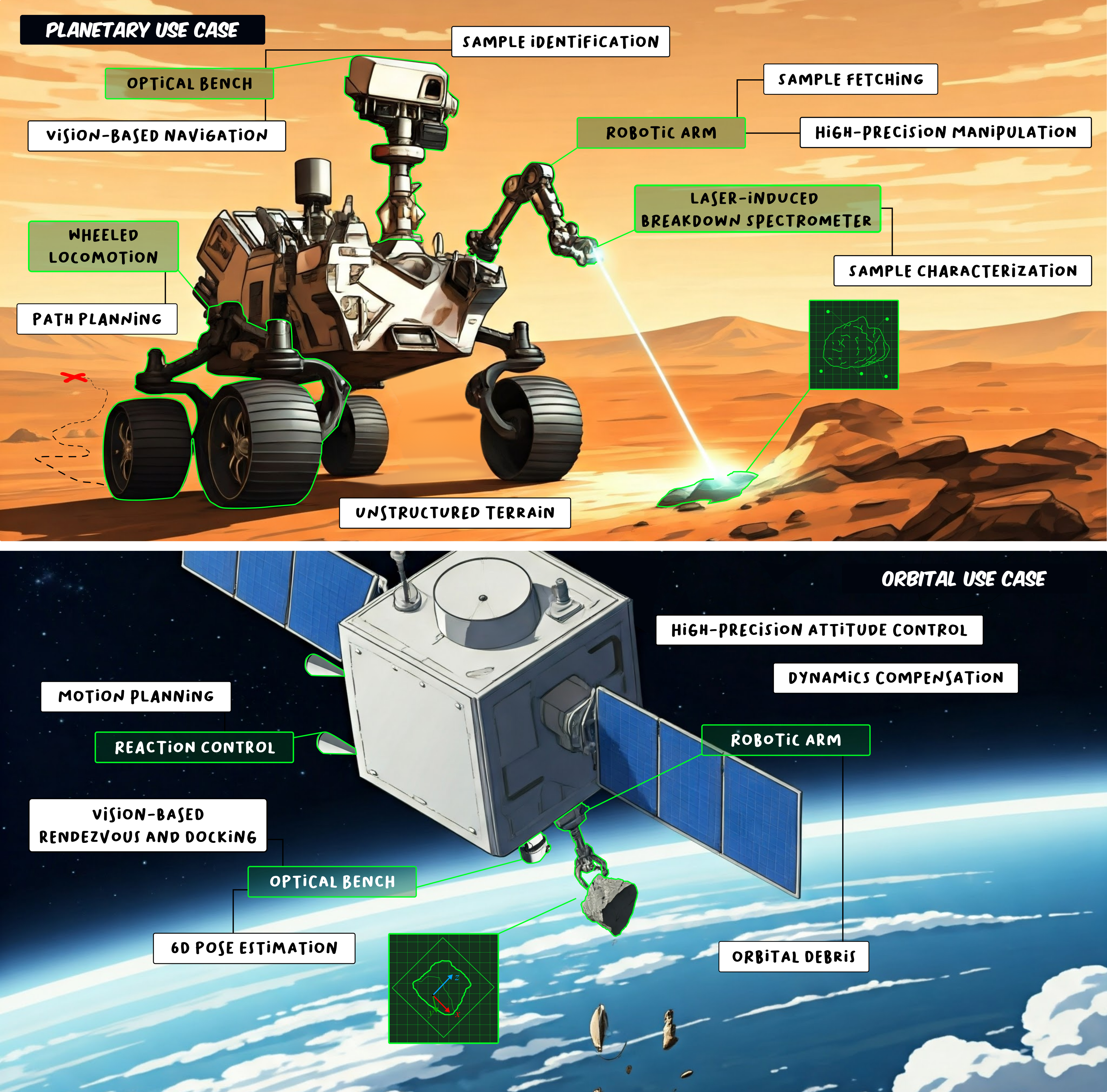

Effectively addressing these key problems and ultimately achieving the associated project objectives demands specific knowledge of Computer Science, Systems Engineering, Artificial Intelligence, Control Systems, and Robotics, while requirements need to be drawn from areas such as Planetary Science, Geology, Astrobiology, Space Safety, and Sustainability. With this in mind, we have translated needs, challenges, and objectives into the pursuit of two mission-representative use cases that will allow us to fully explore the potential benefits and evaluate the suitability of hybrid approaches for space robotics. These use cases involve the autonomous location, analysis, and fetching of planetary samples and the autonomous identification, approach, and grasping of orbital debris. Both use cases will be performed by highly actuated space robotic testbeds with advanced perception and precise manipulation capabilities. For each scenario, the operational environments will be precisely designed to mimic critical mission conditions and to test the limits of our solutions with edge cases designed around perceptually degraded and off-nominal situations. Through these two purposefully designed use cases, we will attempt to fulfill many of the robotic capabilities demanded by present technology roadmaps and upcoming mission needs, bridging the gap between traditional deterministic methods and data-driven intelligence.

With this project, we aspire to combine novel solutions in perception & navigation (Subproject 1 at UMA-SRL) with unique advancements in motion planning & control (Subproject 2 at UA-HURO). By regularly testing the performance of our pipeline in representative settings, such as analog planetary field tests (Subproject 1) and orbital simulation facilities (Subproject 2), we aim to showcase its transformative potential, establish best practices, and accelerate the adoption of AI-enabled solutions in space. More specifically, we have identified a series of critical enabling technologies and promising candidate methodologies to drive this leap into intelligent hybrid GNC methods and foster the integration of learning-based models in complex space robotic systems. These are outlined below, alongside a detailed review of the state of the art and our anticipated contributions.

In line with the strategic challenges, pressing needs, and unresolved questions defined herein, our **main objective** is to find optimal approaches to enhance the interpretability of learned methods for the different sub-tasks involved in a GNC pipeline, while keeping a close guard on computational efficiency and performance.

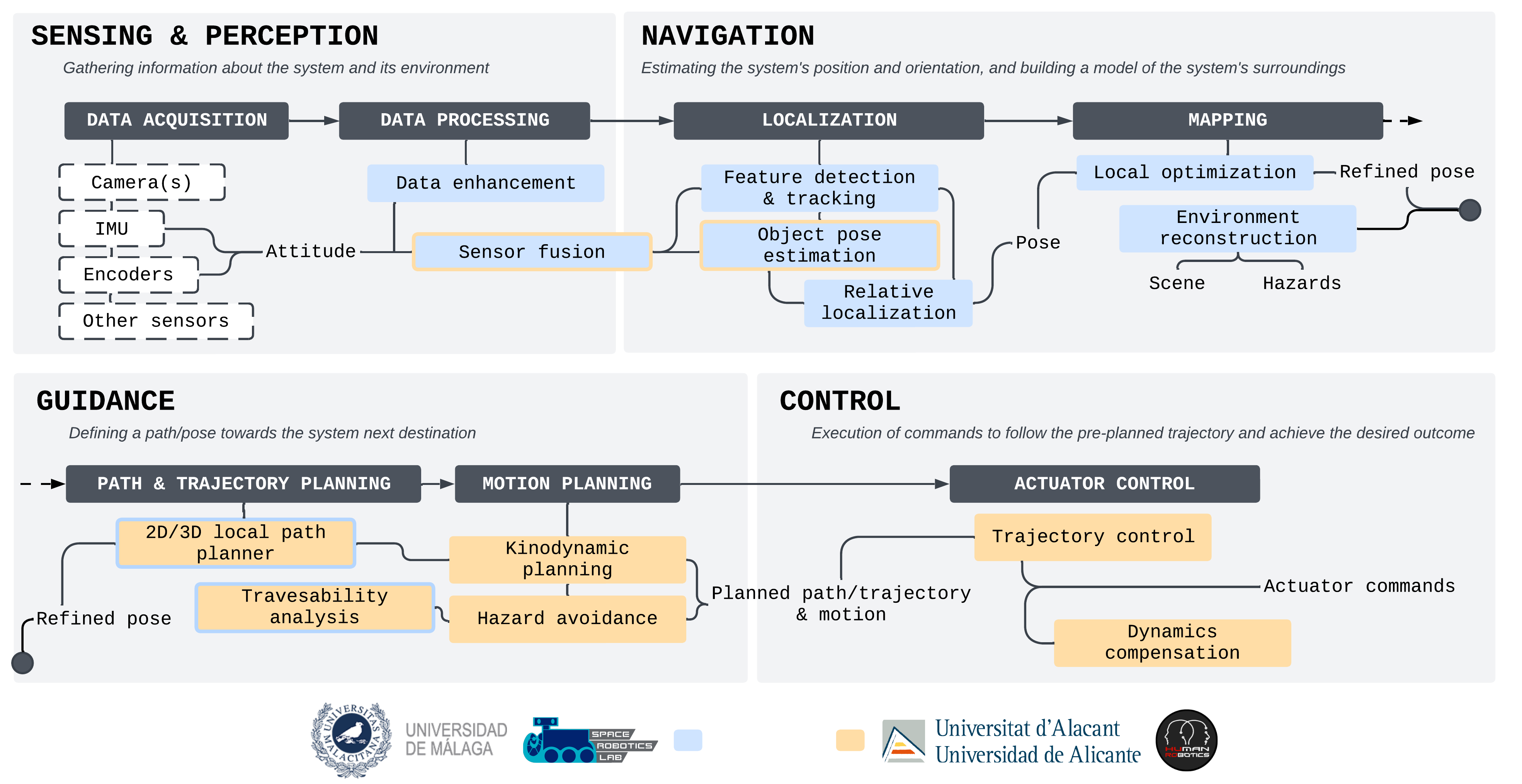

This general objective will be realized through the research of novel methods that combine traditional and data-driven techniques, resulting in a set of modular and cross-domain building blocks for perception & navigation and motion planning & control. Together, these will form the foundational layer of a new open-source GNC framework called INSIGHT, or Interpretable Space Intelligence for GNC & Handling Tasks. The INSIGHT framework will be built on top of hybrid models for localization, mapping, motion planning, and control, which combine the robustness of classical models with the versatility, efficiency, and adaptability of data-driven methods. We will explore different model architectures based on specific tasks and integrate them all across planetary and orbital domains under the umbrella of the INSIGHT framework. INSIGHT will be purposefully designed to be modular, through dedicated building blocks that can be used independently, in combination with one another, or with other pre-existing frameworks to achieve the required level of automation. Each building block, including its associated models, datasets, and validated performance results, will be made openly accessible to the public. To achieve the proposed general objective, we have defined the following specific objectives:

- Specific Objective 1 (SO1-UMA-SRL): Research the use of hybrid models to enhance vision-driven navigation tasks across perceptually degraded environments. We aim to enhance space robotic testbeds in both orbital and planetary domains for autonomous navigation, sample identification, precise characterization, collection, and object pose estimation and tracking under visually challenging scenarios. These include high dynamic range (on-orbit imaging), rapid changes in brightness (fast exposure adaptation), and photon-starved environments (lunar south-pole exploration). By integrating classical methods with learned models like convolutional neural networks and vision transformers, we will optimize sensor data fusion and explore recurrent networks for rover localization and orbital object tracking. We will train a self-supervised encoder to reduce computational complexity for efficient performance on computationally constrained hardware. Algorithms will be designed to be modular, forming dedicated building blocks that can be used independently, in combination with one another, or with other pre-existing frameworks to achieve the required level of automation. Models, datasets, and validated performance results will be publicly accessible.

- Specific Objective 2 (SO2-UA-HURO): Hybrid models and CRL for robust motion planning and control in orbital and planetary environments. We will investigate hybrid models and CRL to enhance robustness, safety, and adaptability in robotic motion planning and control for non-deterministic space robotics environments. To advance the state of the art, we will design and implement a hybrid control strategy that integrates model-based methods for precision with learning-based methods for adaptability, addressing the unique challenges of motion planning and control in space environments. Additionally, we will research and adapt CRL frameworks suitable for motion planning and control of space robots, ensuring that neural network policies adhere to physical and operational constraints. We will enhance the computational efficiency of the proposed control methods to ensure real-time applicability on space robots with limited onboard processing capabilities. The proposed techniques will be applied to specific scenarios, such as rover navigation and manipulation on planetary surfaces, as well as docking and manipulator control in microgravity.

- Specific Objective 3 (SO3-UMA&UA): Evaluate the performance, interpretability improvements, and feasibility of implementing hybrid methods in space through relevant use cases and demonstrations. We will systematically assess the performance of learning methods against established benchmarks to ensure they meet or exceed current standards. This includes analyzing their interpretability when combined with classical models. While deploying these models on space-qualified hardware is beyond the scope of this project, we will assess their feasibility for space applications by considering technical, computational, and practical constraints. We will validate INSIGHT through carefully selected use cases and mission-relevant demonstrations. The validation will involve quantitative and qualitative assessments to measure the framework's effectiveness, reliability, and adaptability, which will help us guide further refinements and establish confidence in the framework's practical utility.

These specific objectives are split between both teams (UMA-SRL & UA-HURO) according to their previous experience. In particular, UMA-SRL has previous experience in novel navigation methods for planetary exploration and will therefore be in charge of accomplishing SO1. UA-HURO has experience in researching methods for motion planning and control in orbital robotics and will be responsible for SO2. Finally, SO3 will be achieved jointly by both teams, integrating the navigation and motion planning & control approaches to perform demonstrations through relevant use cases.
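As a purely illustrative aside on SO2, one common way to combine a model-based controller with a learned component is to add a bounded learned residual to a classical control law. The sketch below assumes a single joint, a PD law as the classical part, and a toy function standing in for a trained policy. All names (`HybridController`, `toy_residual`, the gains and limits) are hypothetical and are not taken from the project; this is not the project's control strategy and it does not implement CRL.

```python
from dataclasses import dataclass
from typing import Callable


def clamp(value: float, limit: float) -> float:
    """Saturate value to the symmetric interval [-limit, limit]."""
    return max(-limit, min(limit, value))


@dataclass
class HybridController:
    """PD law (classical part) plus a bounded learned residual (data-driven part)."""

    kp: float                                  # proportional gain (assumed value)
    kd: float                                  # derivative gain (assumed value)
    residual: Callable[[float, float], float]  # stand-in for a trained policy
    residual_limit: float                      # max |torque| the learned term may add
    torque_limit: float                        # actuator saturation

    def command(self, q_ref: float, q: float, dq: float) -> float:
        error = q_ref - q
        # Classical, interpretable term: its behavior follows directly from kp and kd.
        u_model = self.kp * error - self.kd * dq
        # Learned term: clamped so it can only nudge the classical command.
        u_learned = clamp(self.residual(error, dq), self.residual_limit)
        # Final saturation enforces the actuator constraint regardless of the policy.
        return clamp(u_model + u_learned, self.torque_limit)


def toy_residual(error: float, dq: float) -> float:
    """Deliberately overconfident placeholder for a neural network output."""
    return 100.0 * error


controller = HybridController(
    kp=2.0, kd=0.5, residual=toy_residual, residual_limit=0.2, torque_limit=1.0
)
print(controller.command(q_ref=0.1, q=0.0, dq=0.0))
print(controller.command(q_ref=1.0, q=0.0, dq=0.0))
```

Bounding the learned contribution keeps the worst-case behavior attributable to the classical law, which is one simple way to preserve interpretability while still allowing data-driven adaptation.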

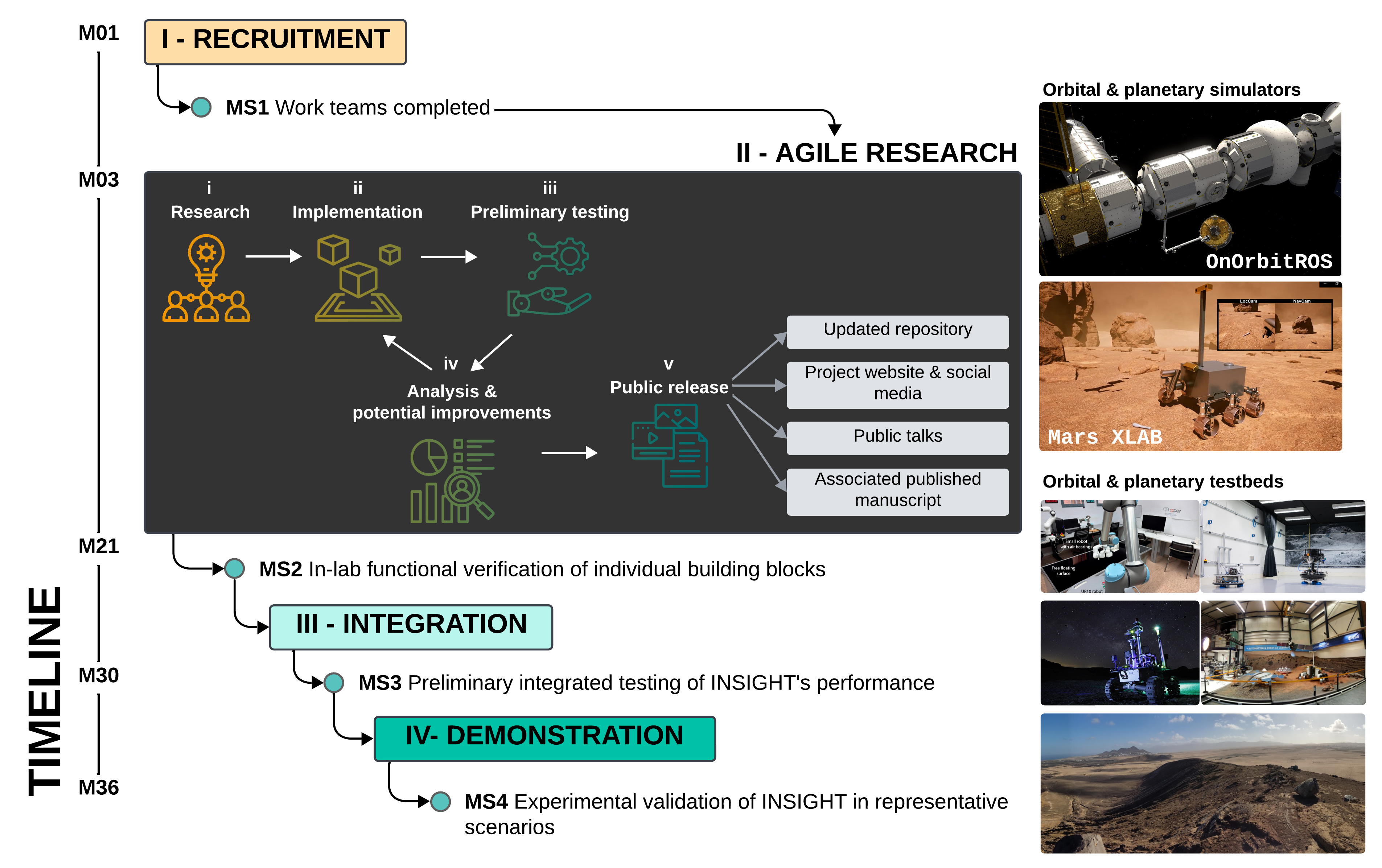

To accomplish the project's main objective and its corresponding specific objectives, we will follow a multistage methodology outlined in the figure below. This approach has been tested in former projects and proven to support the successful execution of tasks and milestones in high-risk, high-reward projects like INSIGHT, where early critical discoveries and performance benchmarks shape research directions and guide teams' efforts in subsequent project stages.

As part of this approach, we have devised an initial 3-month Recruitment Phase, required to onboard the new members of the work teams associated with each subproject's open contracts. With the teams completed, we will accomplish the first project milestone (MS1). After this initial phase, the project moves into an 18-month Agile Research Phase. Drawing inspiration from a standardized agile methodology widely adopted in software engineering, we have tailored our own incremental research approach that blends scientific rigor with rapid deployment and testing cycles. This five-stage iterative process starts with foundational research on novel solutions, followed by designing and implementing basic functionality for each of the building blocks comprising our hybrid GNC pipeline and rapidly testing these components using software- and hardware-in-the-loop simulations as appropriate.

This phase ends with the in-lab functional verification of individual building blocks (MS2), thereby accomplishing SO1 and SO2.

For software-in-the-loop testing, we will use immersive simulation platforms formerly developed by UMA-SRL and UA-HURO teams to faithfully recreate not just the operating environments but the kinematics and dynamics of the robotic systems involved. UMA-SRL developed a simulator called Mars Xlab based on Unreal Engine 5 and Vortex Studio to design high-fidelity virtual 3D environments and simulate rover operations prior to real-world testing. UA-HURO engineered a unified open-source framework for space-robotics simulations, called OnOrbitROS. OnOrbitROS is based on Robot Operating System (ROS) and includes and reproduces the principal environmental conditions that space robots and manipulators could eventually experience in an on-orbit servicing scenario. Using these simulation models, we will perform preliminary evaluations of the building blocks for perception, navigation, motion planning, and control. Similarly, we will devise tests in which much of the information gathered by the system is simulated, including extero- and interoceptive sensor signals, and fed into real hardware (e.g., robotic arm or rover testbed). These tests will evaluate high- and low-level control approaches in realistic scenarios prior to deploying them in the final system. This dual approach is essential to narrow the gap between ideation, research, implementation, and testing, especially given the complexity of the robotic systems involved in the project.

To maximize compatibility with existing methods and to guarantee the usability of the developed building blocks, we will use ROS. Leveraging ROS as the backbone of the INSIGHT GNC architecture streamlines deploying verified libraries and methods in real-world scenarios and simplifies the integration of solutions from both subprojects during the upcoming phase of the project.
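To make the idea of independently usable building blocks more concrete, the following is a minimal, framework-free sketch of blocks that share one interface and can be run alone or chained. It is only an illustration: the `BuildingBlock` protocol, the two toy blocks, and `run_pipeline` are hypothetical names, not the INSIGHT API, and in the actual architecture ROS would handle the communication between components.

```python
from typing import Protocol


class BuildingBlock(Protocol):
    """Hypothetical common interface: each block reads and extends a shared dict."""

    name: str

    def step(self, data: dict) -> dict: ...


class ToyLocalizer:
    name = "localizer"

    def step(self, data: dict) -> dict:
        # Placeholder for perception & navigation: average the range readings.
        ranges = data["ranges"]
        return {**data, "position": sum(ranges) / len(ranges)}


class ToyPlanner:
    name = "planner"

    def step(self, data: dict) -> dict:
        # Placeholder for motion planning: displacement still needed to reach the goal.
        return {**data, "displacement": data["goal"] - data["position"]}


def run_pipeline(blocks: list, data: dict) -> dict:
    """Run the blocks in order, passing each block's output to the next one."""
    for block in blocks:
        data = block.step(data)
    return data


# Blocks chained together.
result = run_pipeline([ToyLocalizer(), ToyPlanner()], {"ranges": [1.0, 3.0], "goal": 5.0})
print(result["position"], result["displacement"])

# The planner used on its own, with a position supplied by some other source.
standalone = ToyPlanner().step({"goal": 5.0, "position": 4.0})
print(standalone["displacement"])
```

Because each block depends only on the keys it reads, a block from one subproject could in principle be swapped for an external implementation without touching the others.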

In-lab testing, ad hoc and ongoing rather than milestone-based, bridges the gap between concept and verification. Early and ongoing evaluations will provide insights to iteratively refine our approaches until they meet defined performance benchmarks. The performance of each building block will be evaluated against the key performance metrics established collaboratively by subproject teams at the start of the research phase. We will publish validated building blocks, associated datasets, and their documentation in public repositories under an MIT or an Open-Source Hardware (OSHW) license via open platforms such as Zenodo and GitHub. This ensures that the project's outcomes are freely available for use, modification, and distribution, fostering collaboration and innovation within the broader research community. The Agile Research Phase represents the primary focus of our efforts and is crucial to the success of this project.

Once each building block of the INSIGHT pipeline has been tested and its functionality verified, research outputs from both UMA-SRL and UA-HURO teams will be integrated during the Integration Phase. This phase will produce the first complete, cross-domain, hybrid GNC framework for space robotics. The modular design and incremental testing of the foundational INSIGHT modules (perception & navigation and motion planning & control) in the ROS framework will significantly simplify integration, requiring only minor adaptations in most cases. These adaptations will take place through two research stays. An initial 4-month stay of a member of UA-HURO's team at UMA will focus on integrating and adapting planning and control methods for planetary scenarios. It will be followed by a second 4-month stay of a UMA-SRL team member at UA, aimed at integrating UMA's navigation methods for an in-orbit servicing context.

After this integration, MS3 will be achieved.

The tests conducted up to this point will have evaluated individual aspects of the functionality expected of the final system. Some capabilities will have been assessed in isolation (e.g., sample identification and grasping without platform motion), while others will have been evaluated in digital simulators or simplified test setups (e.g., motion dynamics compensation upon docking in an orbital simulator). Only in the final Demonstration Phase will the performance of the full INSIGHT pipeline be validated, through end-to-end demonstrations that mimic the real operating conditions of upcoming planetary exploration (led by UMA-SRL) and on-orbit servicing missions (led by UA-HURO).

Once this phase finishes, MS4 and SO3 will be accomplished.

Timeline

- Start date: September 2025

- Duration: 36 months

News

Project outcome

Conference & workshop contributions

Other publications, datasets, and code resulting from this project will be listed here.